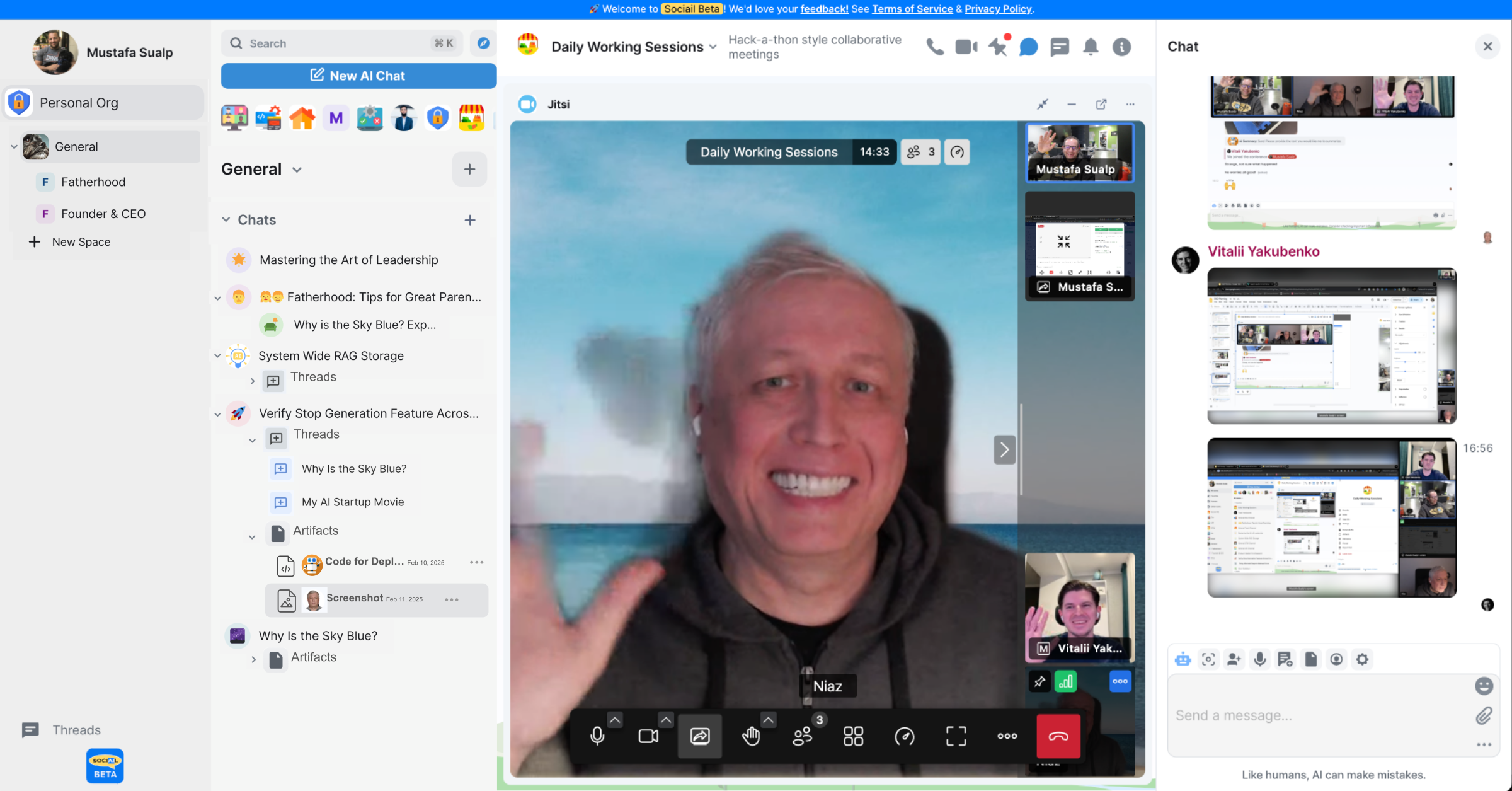

Start in a shared room

People, AI teammates, conversation, and context begin in the same workspace.

Your workspace for shared context and durable work

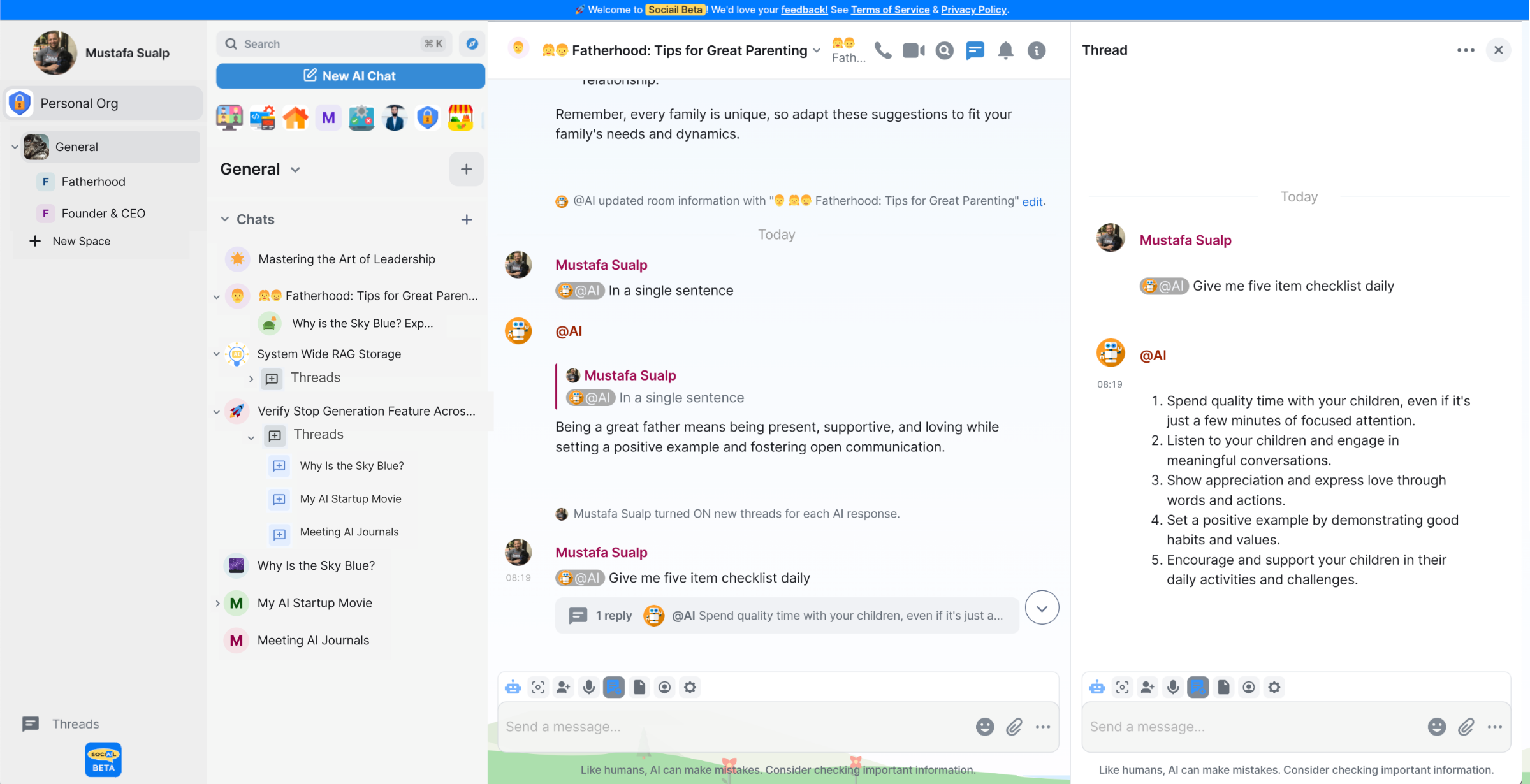

Sociail starts with shared AI rooms: humans and AI working in the same context, producing useful outputs, keeping privacy and trust visible, and giving every feature a clear help path.

AI participates where the work is happening instead of disappearing into a separate tab.

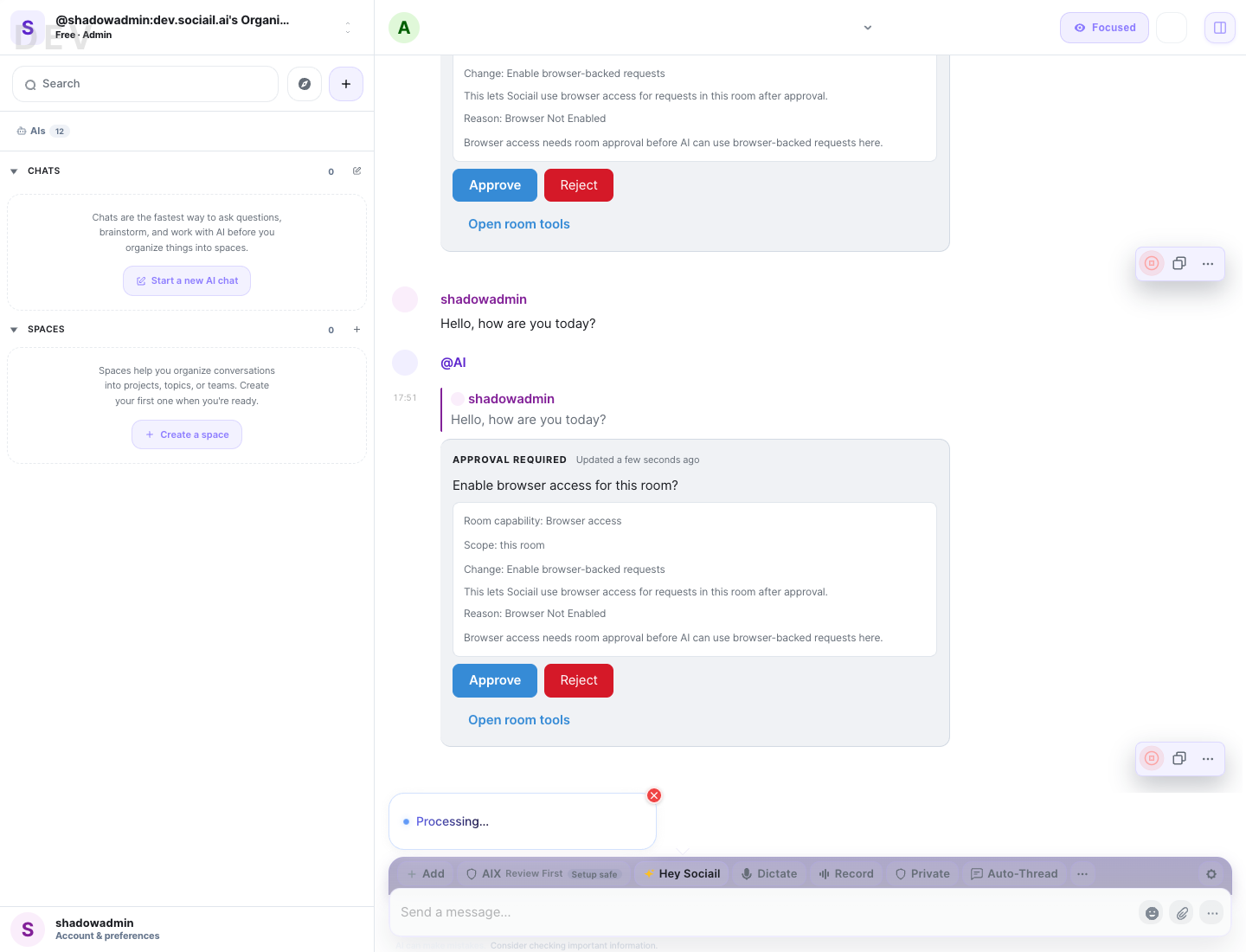

Approvals, blocked states, receipts, and support paths stay close to the action.

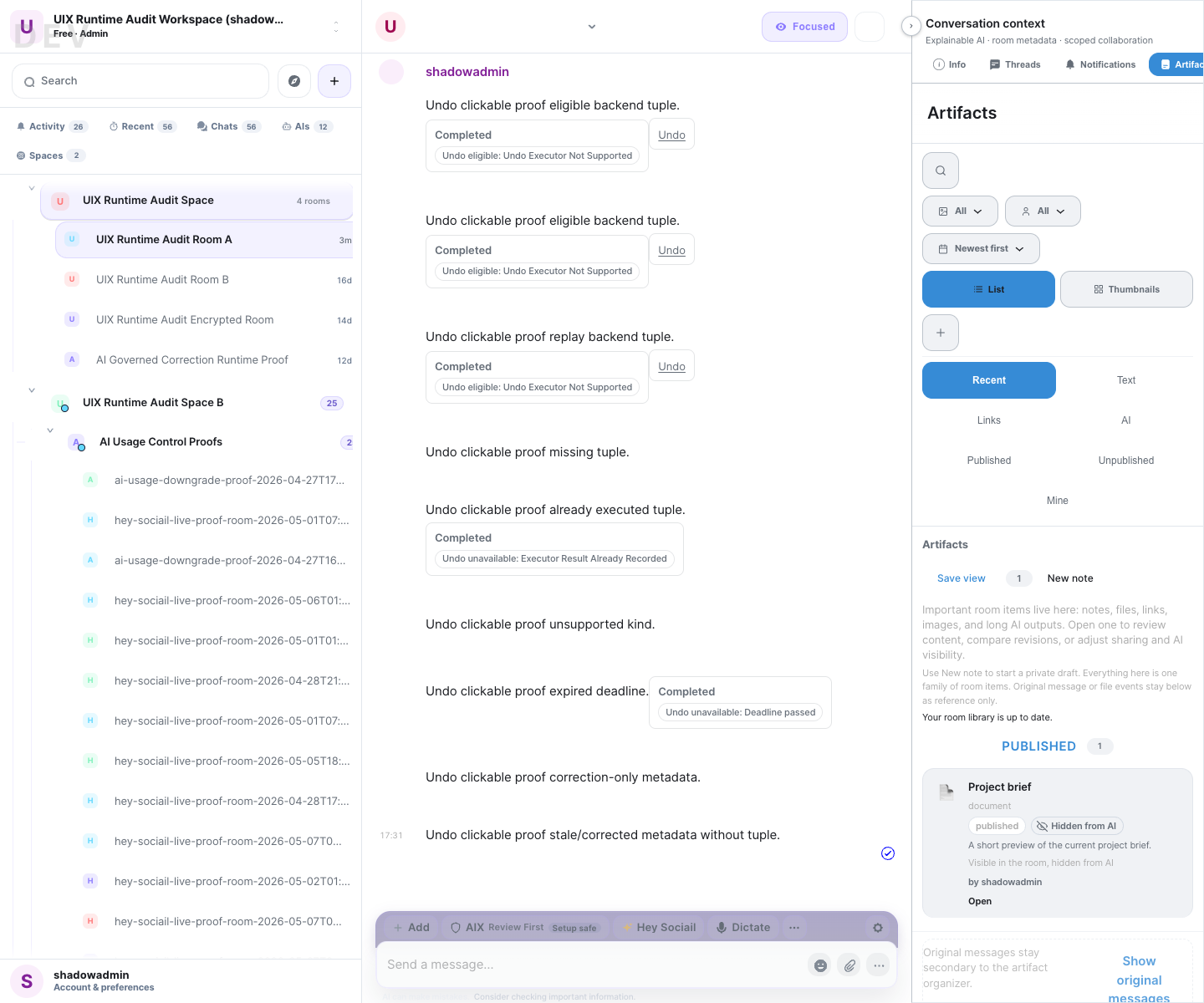

Artifacts, settings, and workspace controls help the day end with something durable.

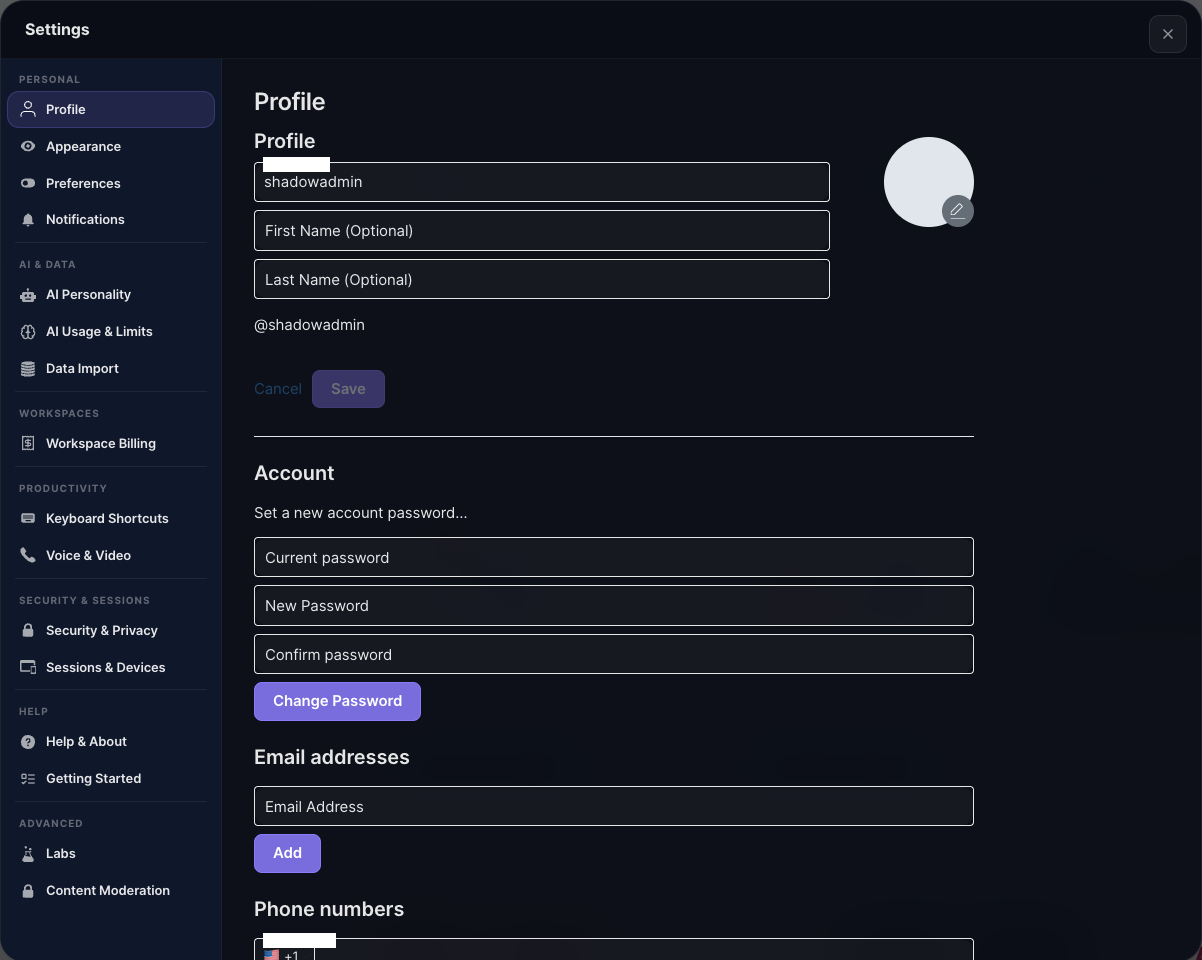

Privacy belongs inside the way each surface works: who can see AI, what context it can use, what it can hear or transcribe, what it can do, and where sensitive support requests go.

People should be able to tell when AI is present, responding, blocked, or waiting for review.

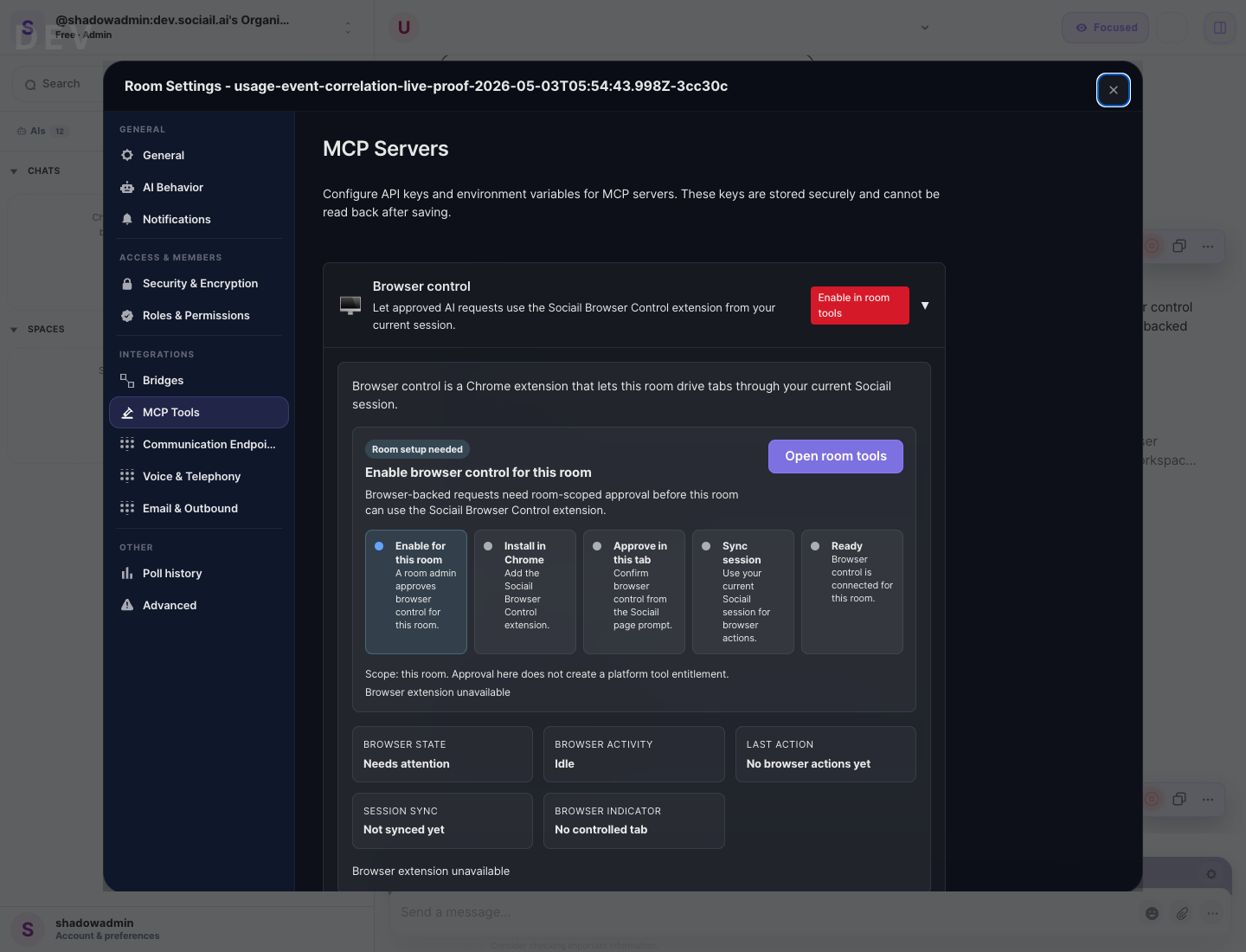

Rooms, artifacts, browser help, support, and future data surfaces carry their own boundaries.

Hey Sociail voice and meeting AI need visible state, a wake mode, and separate permissions for listening, speaking, transcription, and actions.

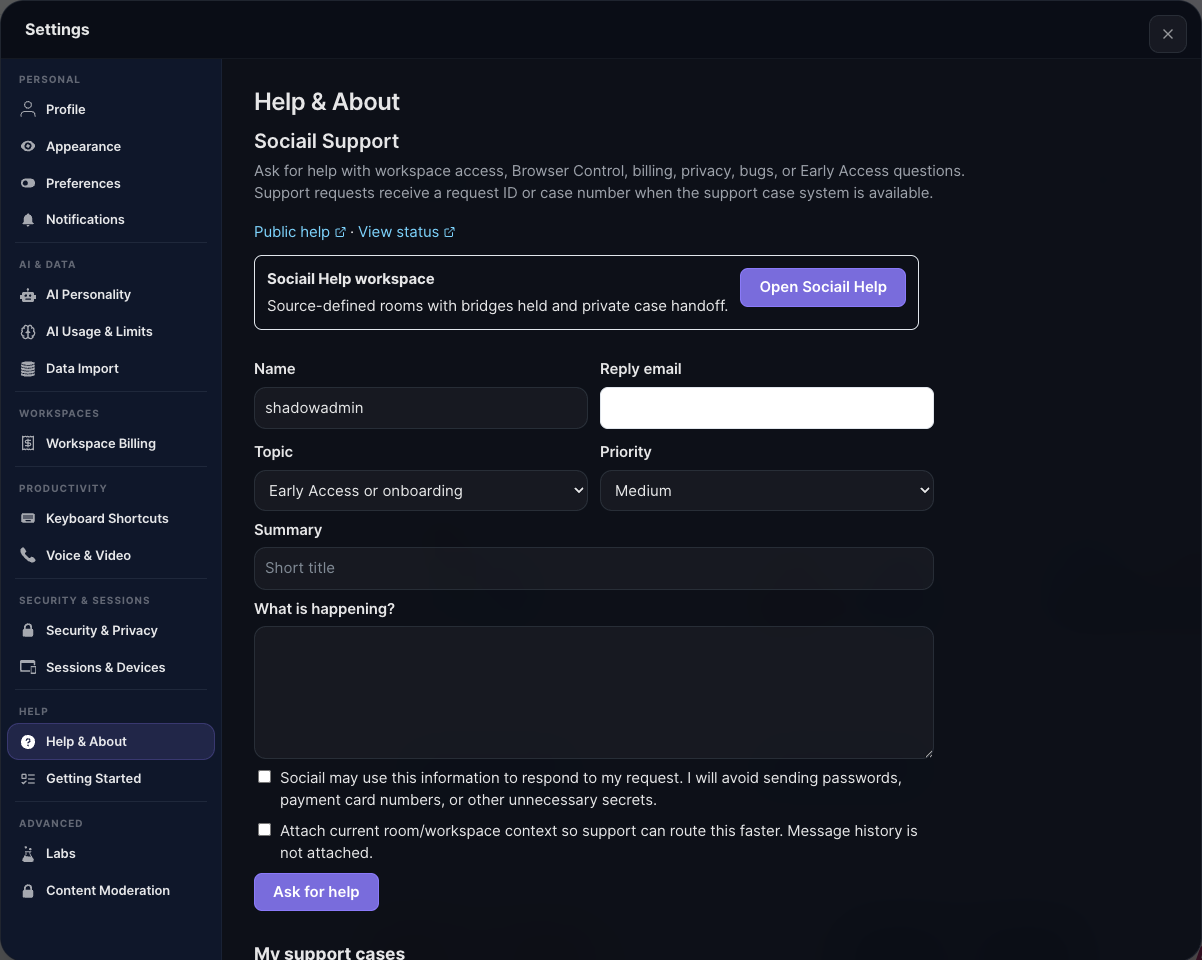

Billing, security, account, workspace ownership, and sensitive setup issues route to private support.

The point is not to become another chat tab or another document cabinet. Sociail is the collaborative AI workspace where people, AI, context, outputs, and control stay together.

Team conversation

Sociail keeps people, AI teammates, decisions, and follow-up in the same room instead of splitting work across disconnected threads.

Workspace memory

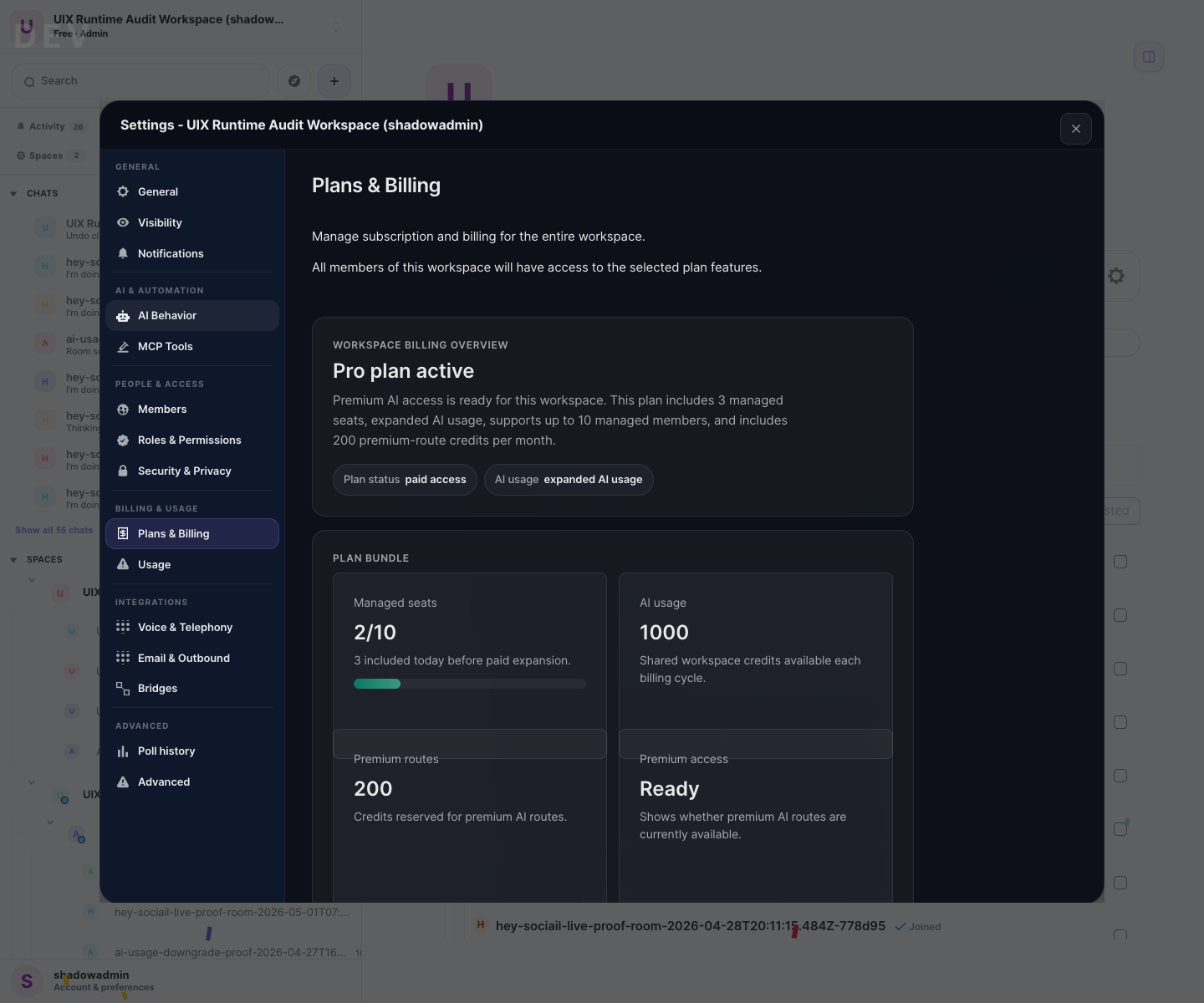

Artifacts, settings, usage, and proof-gated Room Data give the work a place to live after the chat scrolls away.

AI assistance

AI help belongs inside the workspace where context, participants, approvals, and outcomes are already visible.

Sociail layer

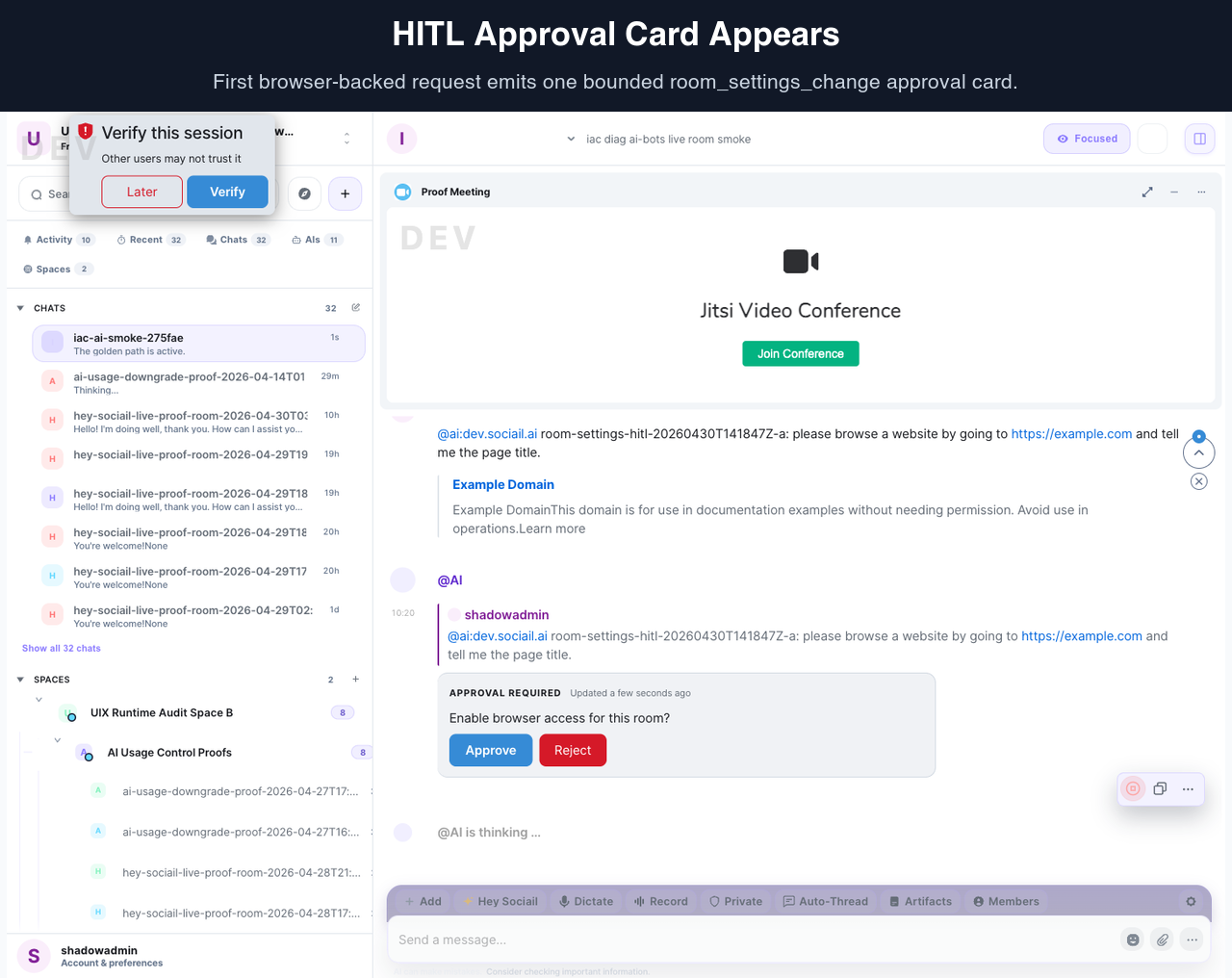

Approvals, receipts, boundaries, support, and cost-aware gates make shared AI collaboration easier to trust.

The portal separates what users can evaluate today from proof-gated work and later platform primitives. That keeps the vision visible without asking users to decode internal plans.

The surfaces we can show with real product proof now: rooms, AI teammates, Hey Sociail voice, artifacts, trust, Browser Control, Sociail Me seed settings, workspace controls, and support.

Work that is being hardened behind pilots or internal proof gates before it becomes public-facing: Room Data, managed endpoints, memory safety, and deeper Sociail Me guidance.

Ambitious primitives that matter to the thesis but stay gated until trust, security, proof, support, and pricing are ready.

These pages use real product screenshots, explain the user outcome, and name current boundaries. They are the right starting point for evaluating Sociail today.

Launch-visible: One shared place for people, AI teammates, context, and work outputs.

Launch-visible: Bring AI into the room as a visible participant in shared work.

Proof-backed: Talk to Sociail in the room when voice is enabled.

Launch-visible: Keep useful outputs attached to the room, with reviewable state.

Launch-visible: Show users what needs review before AI-assisted work crosses a boundary.

Proof-backed: Let a Sociail AI teammate help with browser pages you choose.

Seed surface: Make user identity, preferences, and AI boundaries easier to shape.

Launch support: Keep plan, usage, members, and access state visible to workspace owners.

Launch support: Keep support close to the product, with private paths for sensitive issues.

These are close enough to explain directionally, but they remain gated until product proof, consent, support, pricing, and operational controls are ready.

P1 gated alpha: A pilot-gated way to turn files or tables into reviewed room data with staged changes, receipts, correction, and forget paths.

Proof-gated: Governed communication endpoints for support and design-partner workflows, with consent, cost, and usage controls.

Post-seed: Guided preference and boundary setting so personal AI collaboration can become more useful without hidden learning claims.

Meeting-gated: A disclosure-first meeting lane for visible AI presence, wake invocation, transcript state, recap drafts, and review-first follow-up.

Sociail has a broader platform thesis, but later primitives do not become public promises until the trust, privacy, implementation, and support model is ready.

Template-backed AI teammates with scoped roles, boundaries, receipts, and room reuse.

Observable agent coordination that can propose work while humans keep consent and commitment authority.

Secret-backed capabilities where AI receives handles and permissions rather than raw credentials.

Request Early Access to evaluate the shared-room experience, then use help or support when setup, trust state, or workspace access needs attention.